AI Platforms Tighten Controls as Advisories and Incidents Mount

Coverage: 16 Mar 2026 (UTC)

Security-focused upgrades for AI and data platforms led the day. Check Point and NVIDIA paired simulation with policy validation to de-risk AI data center rollouts, while Microsoft Purview expanded data loss prevention, insider risk, and audit controls across Fabric to curb oversharing and govern AI assistants. Alongside these preventive moves, critical advisories and fresh incidents underscored the need for disciplined hardening and rapid response.

Platform Controls for AI and Data Workloads

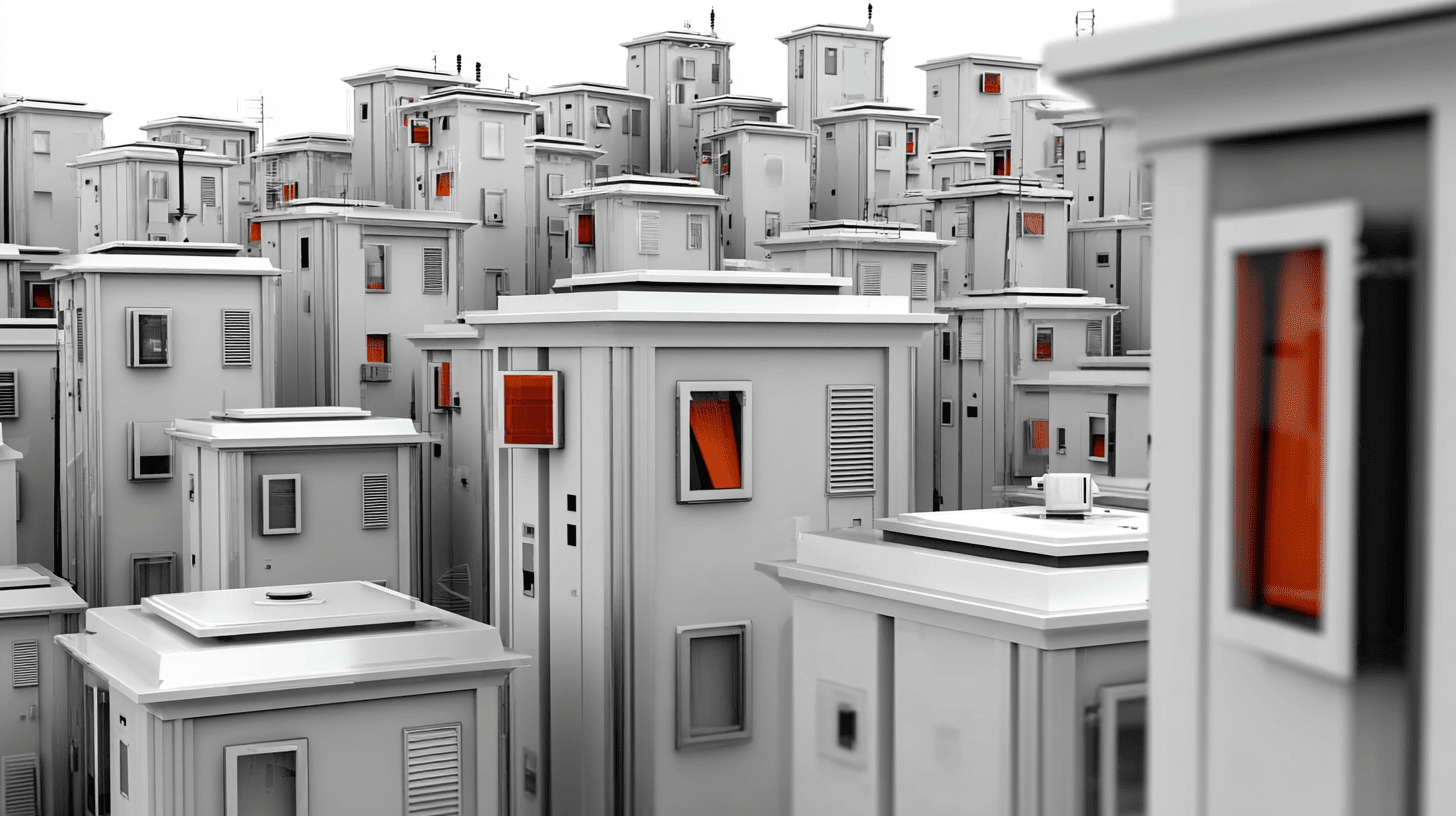

Check Point is integrating with NVIDIA DSX Air to let teams model complex “AI Factory” topologies and automation in a cloud-based lab before production. The approach uses simulated end‑to‑end deployments to validate integrations, spot misconfigurations, and verify policy enforcement at scale, generating reproducible artifacts to support audit and governance. By front‑loading security and operability testing, organizations can reduce rework and accelerate rollouts for sensitive AI workloads.

At GTC 2026, Google Cloud and NVIDIA detailed co‑engineered options spanning fractional GPUs, rack‑scale systems, orchestration integrations such as Dynamo with GKE Inference Gateway, and operational improvements for long‑running training jobs. In analytics, an upgraded BigQuery Gemini assistant now recognizes active query context, generates advanced SQL (including federated queries), surfaces schemas and ownership metadata, and analyzes job histories to explain failures and suggest remediations, all while respecting existing permissions.

Microsoft extended Purview controls across Fabric with generally available DLP for warehouses and KQL/SQL databases, GA Insider Risk Management for lakehouses, and preview capabilities that detect sensitive data in Copilots and Agents and integrate with DSPM, Audit, eDiscovery, and retention. The update targets oversharing risks and data quality gaps by unifying policy enforcement, publication workflows, and scalable quality checks. Why it matters: tighter governance and safety controls are prerequisites for trusted AI activation on distributed data estates.

AWS added data access and utilization features. Neptune now reads S3 data directly inside openCypher queries via neptune.read(), enabling ad‑hoc federation without bulk loads, with access enforced through IAM. SageMaker HyperPod introduced dynamic idle resource sharing, which lets teams borrow unallocated capacity under administrator‑defined limits, improving cluster efficiency while preserving guaranteed quotas.

Advisories and Exploitation: Hardening Priorities

Researchers at Qualys disclosed nine AppArmor flaws collectively dubbed “CrackArmor.” Some chains can strip protections or yield local root on default Linux installations, and additional kernel issues enable crashes, information leaks, or full privilege escalation. CSOonline reports that upstream patches landed after coordinated work with major distros; teams should prioritize kernel updates and reassess AppArmor profile assumptions, especially in containerized environments.

CISA added CVE‑2025‑47813 in Wing FTP Server to the Known Exploited Vulnerabilities Catalog, triggering BOD 22‑01 remediation deadlines for federal agencies and urging all organizations to patch or mitigate promptly. The KEV addition reflects active exploitation and the risk that information‑disclosure bugs can aid follow‑on compromise.
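A minimal sketch of how a team might check its own inventory against the catalog follows. It assumes the catalog has already been downloaded and parsed from CISA's JSON feed, and that each entry carries `cveID` and `dueDate` fields. The function name, the inventory list, and every date in the sample data are hypothetical placeholders, not real catalog values.

```python
from __future__ import annotations

from datetime import date


def kev_matches(catalog: dict, inventory_cves: list[str]) -> list[dict]:
    """Return catalog entries whose CVE ID is in the inventory, soonest due date first."""
    # Normalize case and whitespace so "cve-2025-47813 " still matches.
    wanted = {cve.strip().upper() for cve in inventory_cves}
    hits = [
        entry
        for entry in catalog.get("vulnerabilities", [])
        if entry.get("cveID", "").upper() in wanted
    ]
    # dueDate is assumed to be an ISO date string (YYYY-MM-DD).
    return sorted(hits, key=lambda entry: date.fromisoformat(entry["dueDate"]))


# Placeholder data for illustration only; the due dates are made up.
SAMPLE_CATALOG = {
    "vulnerabilities": [
        {"cveID": "CVE-2025-47813", "dueDate": "2099-01-15"},
        {"cveID": "CVE-0000-00001", "dueDate": "2099-01-01"},
        {"cveID": "CVE-0000-00002", "dueDate": "2099-02-01"},
    ]
}

if __name__ == "__main__":
    for entry in kev_matches(SAMPLE_CATALOG, ["cve-2025-47813", "CVE-0000-00001"]):
        print(entry["cveID"], "due", entry["dueDate"])
```

Sorting by due date puts the most urgent remediation first, which matches how BOD 22‑01 deadlines are typically tracked.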

Separately, an Infosecurity report on Phantom Labs’ research shows AWS Bedrock AgentCore’s Code Interpreter Sandbox Mode can be steered to exfiltrate data via DNS when agents process malicious inputs, especially under permissive IAM roles. AWS characterized DNS resolution as intended in Sandbox Mode and updated documentation; organizations should favor VPC mode or tighten IAM and network boundaries for agentic code execution.
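Defenders sometimes look for DNS-based exfiltration by flagging query names whose subdomain labels are unusually long or random-looking, since encoded data tends to produce such names. The sketch below illustrates that general heuristic only; it is not the method used by Phantom Labs or AWS, and the function names and thresholds are assumptions chosen for illustration.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Shannon entropy of a string, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_exfil(qname: str, max_label_len: int = 40, min_entropy: float = 3.5) -> bool:
    """Flag a DNS query name whose subdomain labels look like encoded payloads."""
    labels = [label for label in qname.rstrip(".").lower().split(".") if label]
    # Skip the last two labels (roughly the registered domain); payloads ride in subdomains.
    for label in labels[:-2]:
        if len(label) > max_label_len:
            return True
        # Only score longer labels; short ones carry too little signal.
        if len(label) >= 16 and shannon_entropy(label) >= min_entropy:
            return True
    return False


if __name__ == "__main__":
    for name in ("www.example.com", "k3j9x2q8z7w1v5b4n6m0.example.com"):
        print(name, looks_like_exfil(name))
```

A heuristic like this produces false positives (CDN hostnames, for example) and is best treated as a triage signal alongside the IAM and network restrictions described above.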

Operational Incidents and Supply Chain Abuse

Microsoft investigated an Exchange Online outage that blocked mailbox and calendar access across Outlook clients and protocols. Admin center notices cited inefficient traffic processing as the cause and configuration changes as the remediation. The incident disrupted productivity and highlights dependency risk on cloud messaging tiers.

In the public sector, the U.K.’s Companies House confirmed a WebFiling logic flaw that let any logged‑in user navigate to other companies’ dashboards and potentially view non‑public details; fixes were applied after the filing portal was taken offline. Reporting by BleepingComputer notes the regulator informed the ICO and NCSC and is investigating whether unauthorized changes occurred.

Meanwhile, supply‑chain abuse resurfaced as the “GlassWorm/ForceMemo” campaign used stolen GitHub tokens to force‑push obfuscated malware into Python repos, with payload retrieval keyed off Solana transaction memos. The Hacker News cites hundreds of affected projects and advises rotating credentials and auditing histories for unexpected rebases.
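One hedged way to audit a repository for rewritten commits: a rebase or amend usually refreshes the committer timestamp while leaving the author timestamp alone, so a large gap between the two can mark commits worth a manual look. This heuristic is an assumption for illustration, not a detection method taken from the cited reporting, and it will also flag legitimate rebases and cherry-picks. The sketch parses the output of `git log --format='%H %at %ct'` (commit hash, author time, committer time in Unix seconds); the function name, the one-day threshold, and the sample hashes are hypothetical.

```python
from __future__ import annotations


def suspicious_commits(log_text: str, max_gap_seconds: int = 86_400) -> list[str]:
    """Return hashes of commits committed much later than they were authored."""
    flagged = []
    for line in log_text.splitlines():
        parts = line.split()
        if len(parts) != 3:
            continue  # skip blank or malformed lines
        commit_hash, author_ts, committer_ts = parts
        try:
            gap = int(committer_ts) - int(author_ts)
        except ValueError:
            continue  # timestamps were not integers
        if gap > max_gap_seconds:
            flagged.append(commit_hash)
    return flagged


# Made-up sample in the "%H %at %ct" layout; real hashes are 40 hex characters.
SAMPLE_LOG = """\
aaaa111 1700000000 1700000005
bbbb222 1700000000 1710000000
cccc333 1700500000 1700500000
"""

if __name__ == "__main__":
    print(suspicious_commits(SAMPLE_LOG))
```

In practice the input would come from running the `git log` command in each repository of interest, and any flagged commit would still need its diff reviewed by hand.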

Research: State-Aligned Campaigns and AI Safety Risks

Palo Alto Networks Unit 42 profiled Boggy Serpens (MuddyWater) operations through early 2026, documenting shifts to trusted-relationship compromises, multi‑wave phishing, and maturing toolchains including Rust‑based implants and Telegram Bot API C2. The Unit 42 assessment details campaigns against maritime and energy targets and recommends strict macro policies, behavioral detection, and layered endpoint defenses, with rapid incident response on suspected compromise.

A Kaspersky analysis of a reported wrongful‑death case tied to intensive interactions with a voice assistant compiles research on the persuasive risks of affective dialogue. The piece urges practical safeguards: avoiding AI as a substitute therapist, preferring text over voice for sensitive topics, limiting sessions, and monitoring vulnerable users. It underscores the need for stronger technical controls and clear policies around agentic interfaces.